
Updated: 22 Apr 2025


Meta has released Llama 3.2, its latest model, designed to push the boundaries of generative AI. With improved inference capabilities and better scaling, it is well suited to AI-driven applications across diverse platforms. This launch also brings the first official release of the Llama Stack, which simplifies how developers use Llama models across various environments (single-node, on-prem, cloud, and on-device) and allows easy deployment of RAG and tools-enabled applications with built-in safety.

We are excited to share this guide in which we'll walk you through how to deploy the Llama 3.2 11B model using the Llama Stack on Hyperstack!

Deploy Llama 3.2 on Hyperstack

Now, let's walk through the step-by-step process of deploying Llama 3.2 11B on Hyperstack.

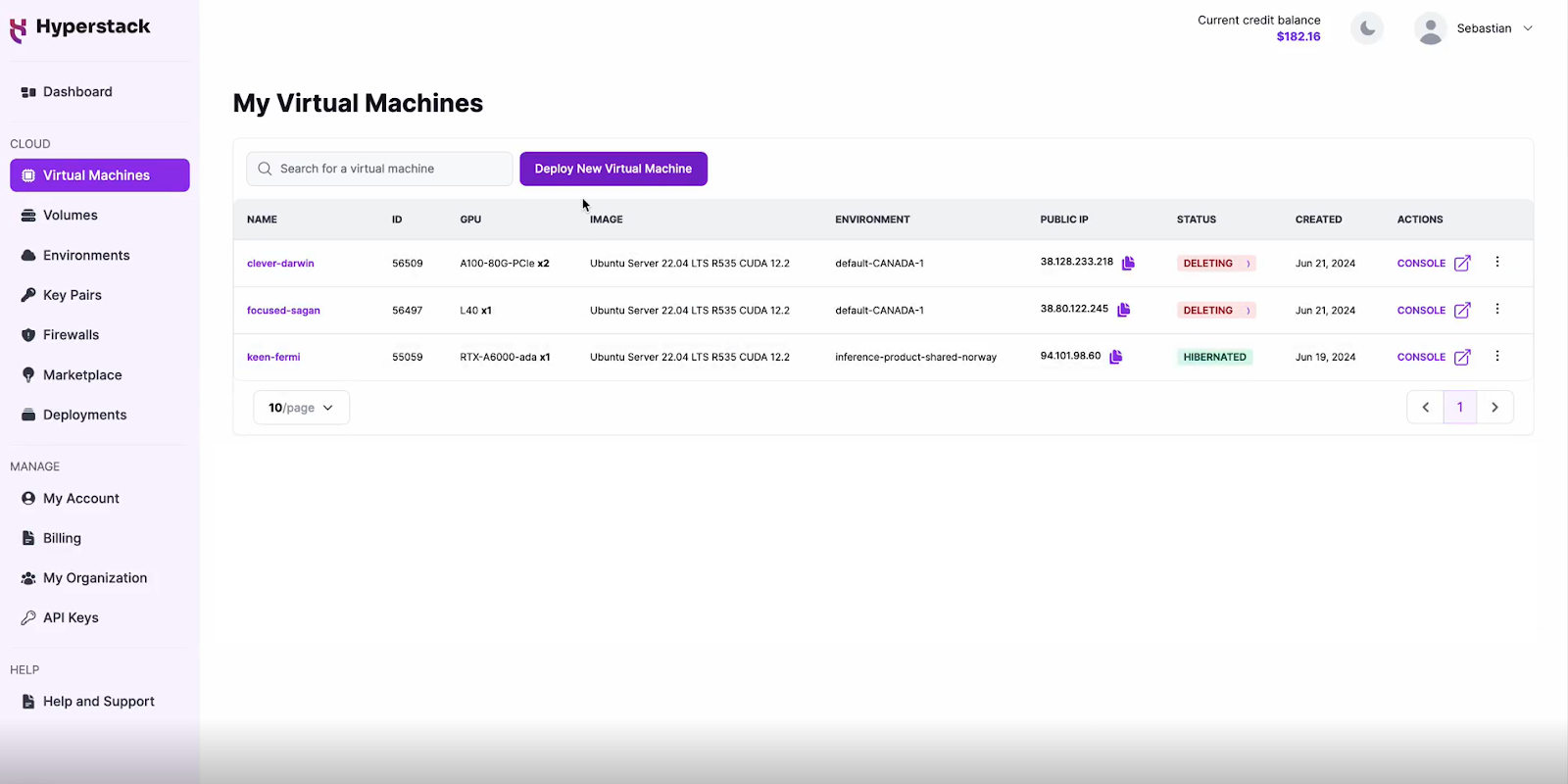

Step 1: Accessing Hyperstack

- Go to the Hyperstack website and log in to your account.

- If you're new to Hyperstack, you'll need to create an account and set up your billing information. Check our documentation to get started with Hyperstack.

- Once logged in, you'll be greeted by the Hyperstack dashboard, which provides an overview of your resources and deployments.

Step 2: Deploying a New Virtual Machine

Initiate Deployment

- Look for the "Deploy New Virtual Machine" button on the dashboard.

- Click it to start the deployment process.

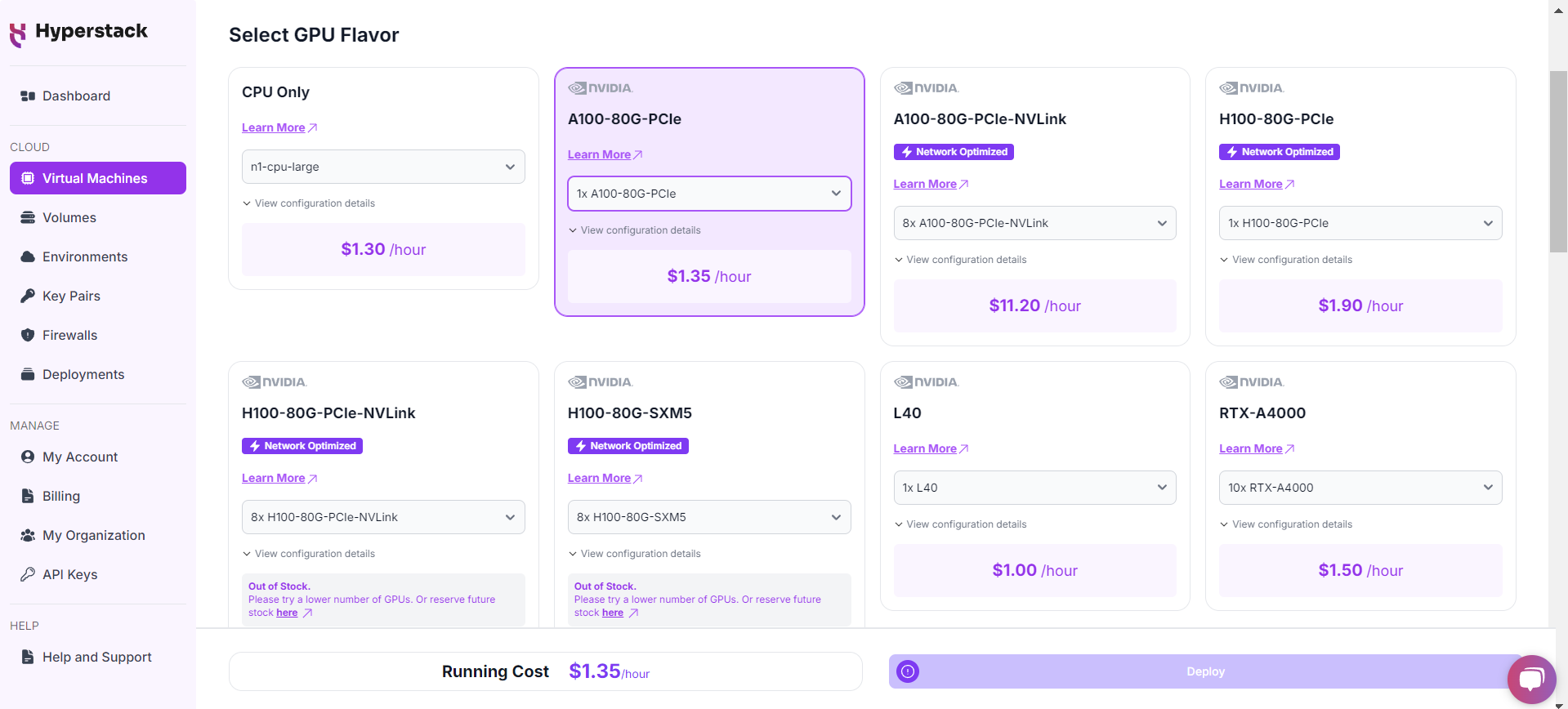

Select Hardware Configuration

- In the hardware options, choose the "1xA100-80G-PCIe" flavour. This configuration provides 1 NVIDIA A100 GPU with 80GB GPU memory, connected via PCIe, offering exceptional performance for running Llama 3.2 11B.

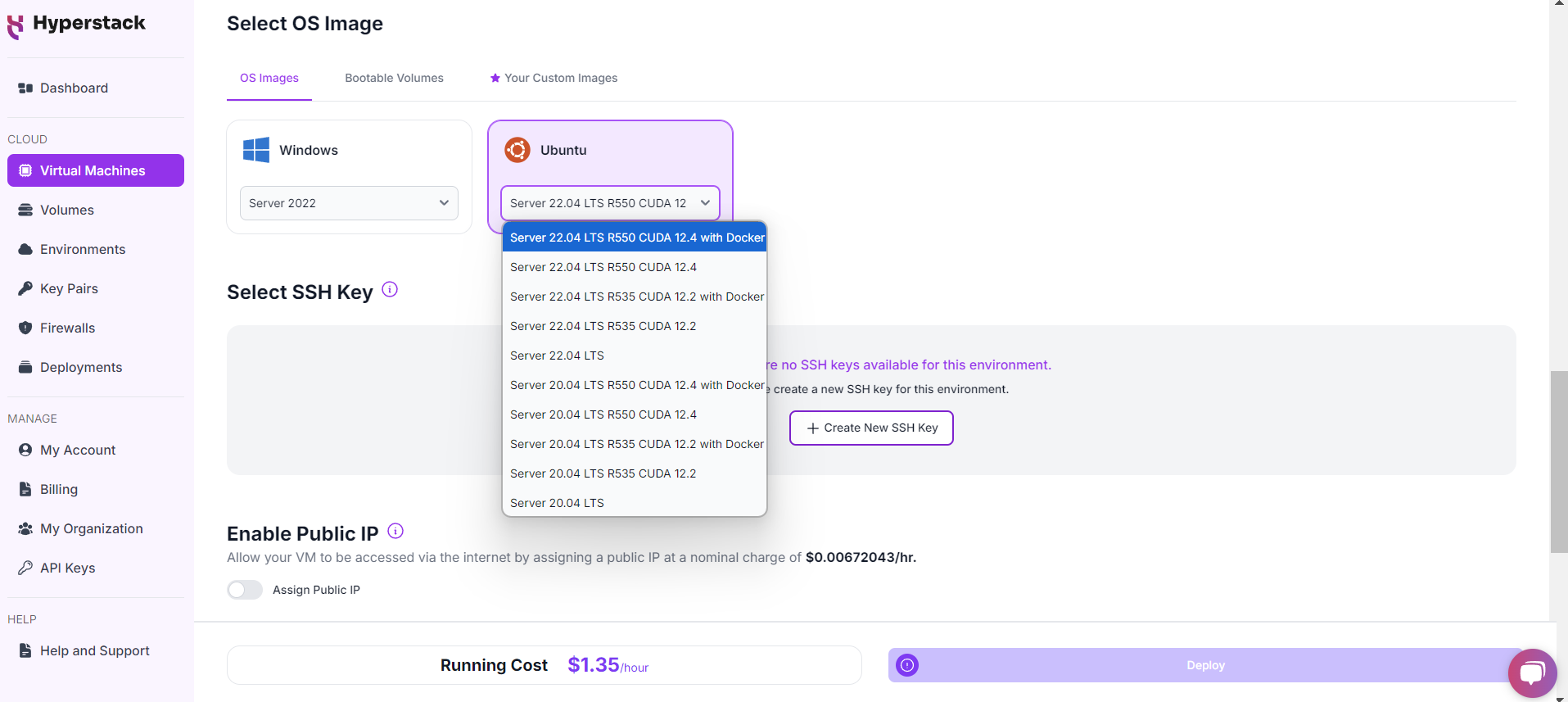
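As a rough sanity check on this hardware choice, the memory needed for the model weights alone can be estimated as parameter count times bytes per parameter. The sketch below assumes 11 billion parameters (taken from the model name) stored at 16-bit precision. It ignores the KV cache, activations, and framework overhead, so treat the result as a lower bound rather than a measured figure. The function name is illustrative.

```shell
# Rough estimate of GPU memory needed for model weights alone.
# Assumptions: parameter count given in billions, bytes per parameter
# given as an integer (2 for 16-bit, 1 for 8-bit).
estimate_weights_gb() {
  local params_billion="$1"
  local bytes_per_param="$2"
  # 1 billion parameters at 1 byte each is roughly 1 GB
  echo $(( params_billion * bytes_per_param ))
}

estimate_weights_gb 11 2   # prints 22
```

At roughly 22 GB for the weights, the 80GB card leaves headroom for the rest of the runtime memory.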

Choose the Operating System

- Select the "Ubuntu Server 22.04 LTS R550 CUDA 12.4 with Docker" image. This is one of our new images that we released last week! It comes with Docker pre-installed.

Select a keypair

- Select one of the keypairs in your account. Don't have a keypair yet? See our Getting Started tutorial for creating one.

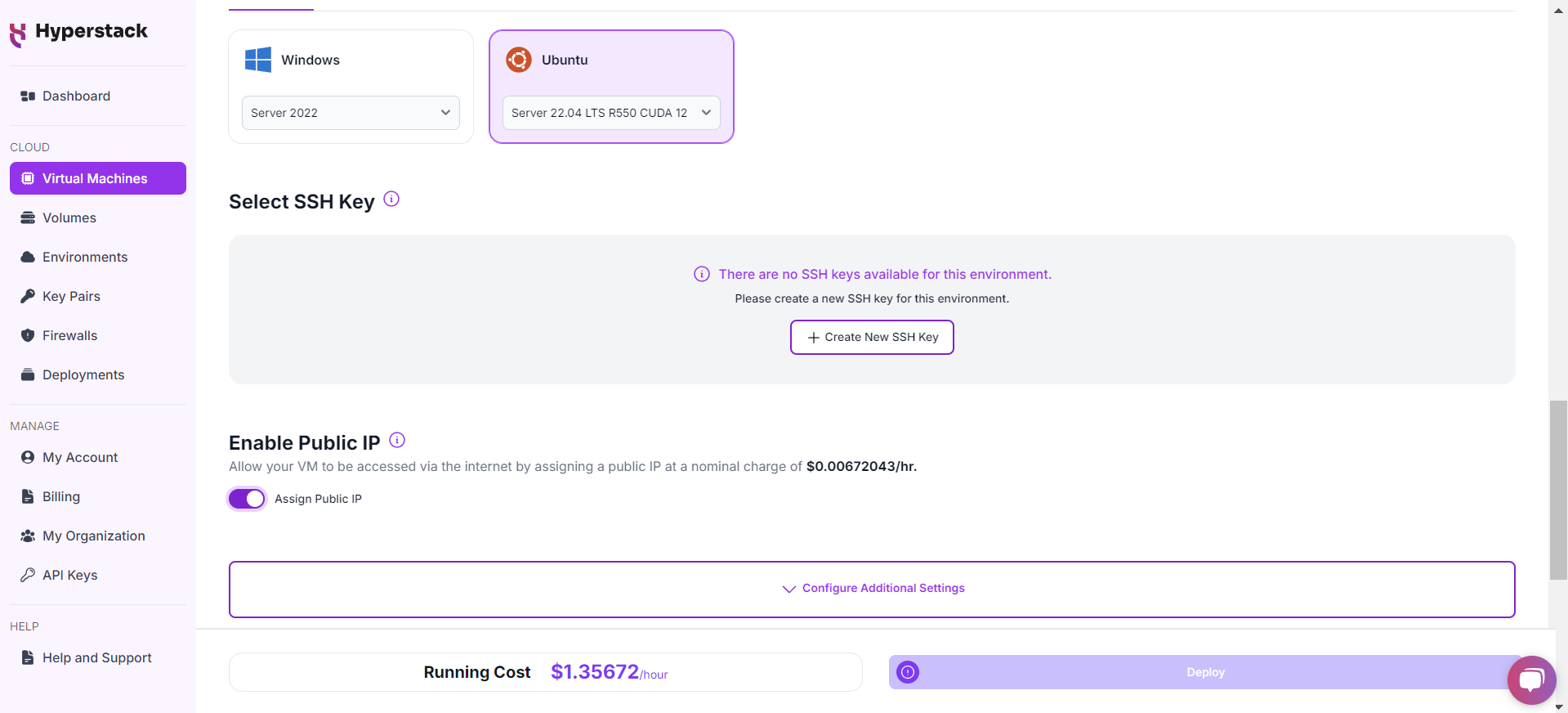

Network Configuration

- Ensure you assign a Public IP to your virtual machine.

- This allows you to access your VM from the internet, which is crucial for remote management and API access.

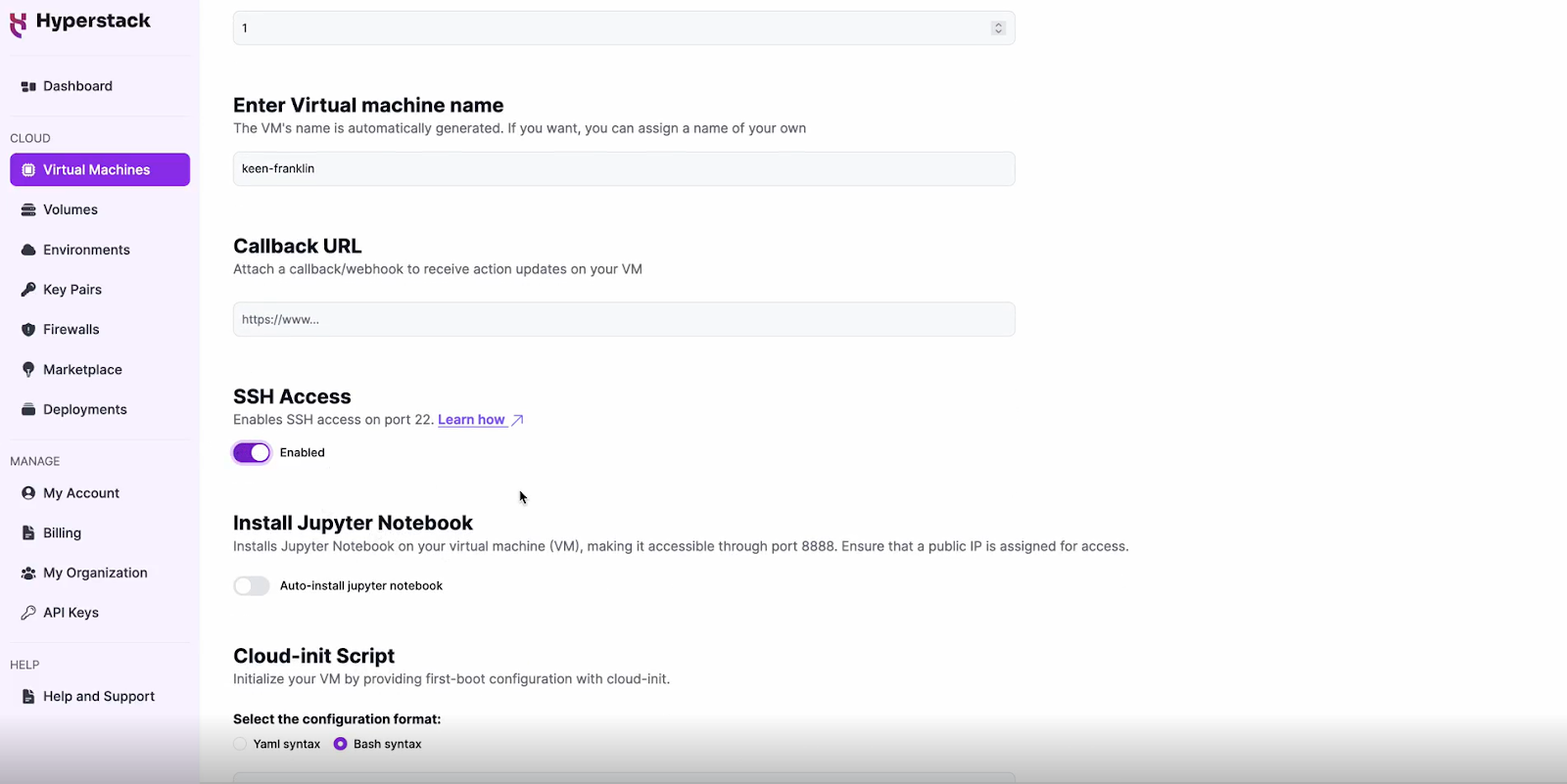

Enable SSH Access

- Make sure to enable an SSH connection.

- You'll need this to securely connect and manage your VM.

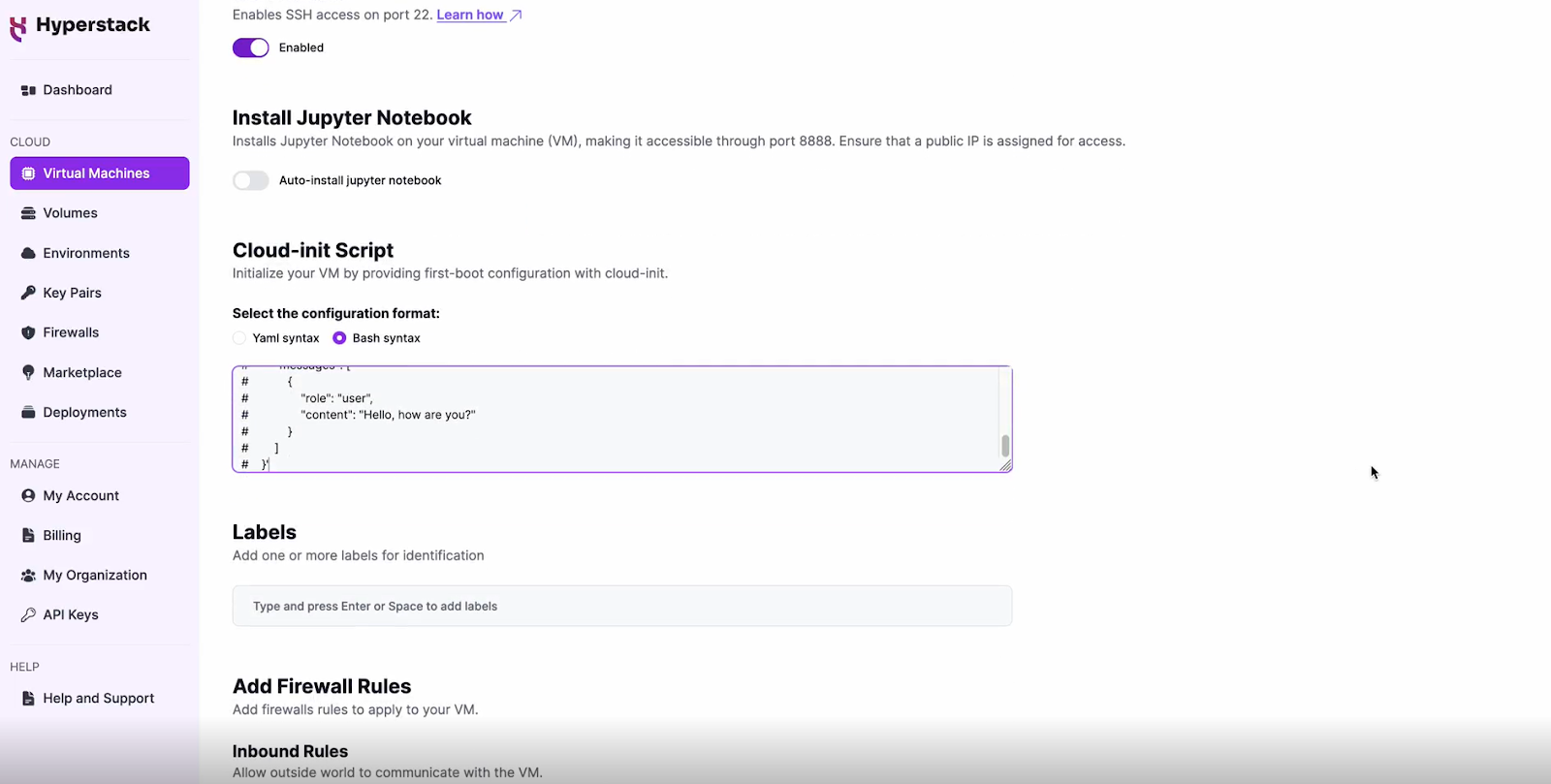

Configure Additional Settings

- Look for an "Additional Settings" or "Advanced Options" section.

- Here, you'll find a field for cloud-init scripts. This is where you'll paste the initialisation script. Click here to get the cloud-init script!

- To use the Llama 3.2 11B model you need to:

  - Request access here: https://huggingface.co/meta-llama/Llama-3.2-11B-Vision-Instruct

  - Create a HuggingFace token to access the gated model, see more info here.

  - Replace line 18 of the attached cloud-init file with your HuggingFace token.

Please note: this cloud-init script will only enable the API once for demo-ing purposes. For production environments, consider using containerization (e.g. Docker), secure connections, secret management, and monitoring for your API.
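Before pasting the token into the cloud-init script, a quick shape check can catch copy-paste slips such as stray spaces. This is a minimal sketch that assumes user access tokens begin with the prefix hf_. It does not contact HuggingFace, so it cannot tell whether the token is valid or has been granted access to the gated model. The function name and the HF_TOKEN variable are illustrative.

```shell
# Check only the shape of a token: an "hf_" prefix and no whitespace.
# Assumption: access tokens start with "hf_". This does not validate the token.
looks_like_hf_token() {
  case "$1" in
    *[[:space:]]*) return 1 ;;   # reject embedded spaces, tabs, or newlines
    hf_?*)         return 0 ;;   # prefix followed by at least one character
    *)             return 1 ;;
  esac
}

# Example with a made-up placeholder value, not a real token
HF_TOKEN="hf_exampleplaceholder"
if looks_like_hf_token "$HF_TOKEN"; then
  echo "token shape looks fine"
else
  echo "token does not look like a HuggingFace token" >&2
fi
```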

Review and Deploy

- Double-check all your settings.

- Click the "Deploy" button to launch your virtual machine.

Step 3: Initialisation and Setup

After deploying your VM, the cloud-init script will begin its work. This process typically takes about 5-10 minutes. During this time, the script performs several crucial tasks:

- Dependencies Installation: Installs all necessary libraries and tools required to run Llama 3.2.

- Model Download: Fetches the Llama 3.2 11B model files from the specified repository.

While waiting, you can prepare your local environment for SSH access and familiarise yourself with the Hyperstack dashboard.

Step 4: Accessing Your VM

Once the initialisation is complete, you can access your VM:

Locate SSH Details

- In the Hyperstack dashboard, find your VM's details.

- Look for the public IP address, which you will need to connect to your VM with SSH.

Connect via SSH

- Open a terminal on your local machine.

- Use the command ssh -i [path_to_ssh_key] [os_username]@[vm_ip_address] (e.g. ssh -i /users/username/downloads/keypair_hyperstack ubuntu@0.0.0.0)

- Replace the bracketed placeholders with the path to your private key, the OS username, and the public IP address shown for your VM in Hyperstack.
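The connection step can be sketched as a small helper that assembles the command from its three parts. The key path, username, and IP address below are placeholders for illustration (203.0.113.10 is a documentation-only address), and the helper name is hypothetical. The chmod line is left commented out because it changes file permissions; OpenSSH typically refuses private keys that other users can read.

```shell
# Placeholder values for illustration; substitute your own details.
KEY_PATH="$HOME/.ssh/keypair_hyperstack"
VM_USER="ubuntu"
VM_IP="203.0.113.10"

# Print the SSH command for a given key path, username, and IP address.
ssh_command() {
  printf 'ssh -i %s %s@%s\n' "$1" "$2" "$3"
}

# chmod 600 "$KEY_PATH"   # restrict the key to your user before connecting
ssh_command "$KEY_PATH" "$VM_USER" "$VM_IP"
```

Printing the command first makes it easy to confirm the three values before running it.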

Interacting with Llama 3.2 11B

To access and experiment with Meta's latest model, SSH into your machine after completing the setup. If you are having trouble connecting with SSH, watch our recent platform tour video (at 4:08) for a demo. Once connected, use this API call on your machine to start using Llama 3.2:

    # Download a sample image and derive its file extension from the URL
    IMAGE_URL="https://www.hyperstack.cloud/hs-fs/hubfs/deploy-vm-11-ecd8c53003182041d3a2881d0010f6c6-1.png?width=3352&height=1852&name=deploy-vm-11-ecd8c53003182041d3a2881d0010f6c6-1.png"
    IMAGE_EXTENSION=$(echo "$IMAGE_URL" | awk -F. '{print $NF}' | cut -d'?' -f1)
    FILE_NAME="/home/ubuntu/downloaded_image.$IMAGE_EXTENSION"
    curl -o "$FILE_NAME" "$IMAGE_URL"

    # Write the JSON payload to payload.json file
    # (the unquoted EOF lets the shell expand $FILE_NAME inside the payload)
    cat <<EOF > payload.json
    {
      "model": "Llama3.2-11B-Vision-Instruct",
      "messages": [
        {
          "role": "user",
          "content": [
            {
              "image": {
                "uri": "file://$FILE_NAME"
              }
            },
            "Describe this image in two sentences"
          ]
        }
      ]
    }
    EOF

    # Use the JSON payload file in the curl command
    curl -X POST http://localhost:8000/inference/chat_completion \
      -H "Content-Type: application/json" \
      -d @payload.json

If the API is not working after ~10 minutes, please refer to the Troubleshooting Llama 3.2 11B section below.
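Because the setup takes several minutes, it can help to wait until the endpoint answers before sending the request. The helper below is a minimal sketch, not part of the Llama Stack. It assumes curl is installed and treats any HTTP response, even an error status, as a sign that the server is listening on port 8000. The function name, attempt count, and delay are illustrative choices.

```shell
# Poll a URL until the server answers or the attempts run out.
# Any HTTP response counts as "up"; only connection failures trigger a retry.
wait_for_api() {
  local url="$1"
  local attempts="${2:-60}"
  local delay="${3:-10}"
  local i=1
  while [ "$i" -le "$attempts" ]; do
    if curl -s -o /dev/null --max-time 5 "$url"; then
      echo "API reachable after $i attempt(s)"
      return 0
    fi
    sleep "$delay"
    i=$((i + 1))
  done
  echo "API not reachable after $attempts attempt(s)" >&2
  return 1
}
```

For example, wait_for_api http://localhost:8000/ 60 10 retries every 10 seconds for about ten minutes, which matches the waiting time mentioned above.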

Troubleshooting Llama 3.2 11B

If you are having any issues, follow these steps:

- SSH into your VM.

- Check the cloud-init logs with the following command: cat /var/log/cloud-init-output.log

- Use the logs to debug any issues.
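The log check above can be narrowed to likely failure lines with a small filter. This is a sketch under assumptions: the keyword list is a guess at common failure markers rather than an exhaustive set, and the function name is hypothetical. It defaults to the log path given in the steps above.

```shell
# Print lines that look like failures, prefixed with their line numbers.
# The keyword list is an assumption; extend it to suit what you see in the log.
scan_log_for_errors() {
  local log_file="${1:-/var/log/cloud-init-output.log}"
  grep -n -i -E 'error|failed|traceback|denied' "$log_file"
}

# On the VM, the following commands may also help:
# cloud-init status --wait                      # block until cloud-init has finished
# tail -n 50 /var/log/cloud-init-output.log     # show the most recent output
```

Calling scan_log_for_errors with no argument reads the cloud-init output log. It returns a non-zero status when no matching lines are found.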

Step 5: Hibernating Your VM

When you're finished with your current workload, you can hibernate your VM to avoid incurring unnecessary costs:

- In the Hyperstack dashboard, locate your virtual machine.

- Look for a "Hibernate" option.

- Click to hibernate the VM, which will stop billing for compute resources while preserving your setup.

Why Deploy Llama 3.2 on Hyperstack?

Hyperstack is a cloud platform designed to accelerate AI and machine learning workloads. Here's why it's an excellent choice for deploying Llama 3.2:

- Availability: Hyperstack provides access to the latest and most powerful GPUs such as the NVIDIA H100 on-demand, specifically designed to handle large language models.

- Ease of Deployment: With pre-configured environments and one-click deployments, setting up complex AI models becomes significantly simpler on our platform.

- Scalability: You can easily scale your resources up or down based on your computational needs.

- Cost-Effectiveness: You pay only for the resources you use with our cost-effective cloud GPU pricing.

- Integration Capabilities: Hyperstack provides easy integration with popular AI frameworks and tools.

Explore our tutorials on Deploying and Using Granite 3.0 and Notebook Llama on Hyperstack.
